For decades, the field of artificial intelligence has been constrained by the very hardware it runs on. Traditional computers, built on the von Neumann architecture of separate processing and memory units, are incredibly fast and efficient for tasks like data processing and calculations. However, they are fundamentally ill-suited to the kind of fluid, parallel, low-power processing that defines the human brain. This mismatch between hardware and algorithm has created a bottleneck for the development of truly intelligent AI, leaving many of the most ambitious projects in the realm of theory. But a profound shift is now underway.

A new generation of researchers and engineers is turning to a paradigm directly inspired by biology. Neuromorphic chips, a new class of hardware, are designed to mimic the structure and function of the human brain. These chips promise AI that is not just more powerful, but also more energy-efficient, more adaptive, and more capable of learning in real time. This is not just a technological advancement; it is a fundamental re-imagining of computation itself, poised to revolutionize robotics, edge AI, and our understanding of the brain. This article provides a guide to the principles of neuromorphic computing, the key architectural features that define it, the major commercial applications driving its development, and the challenges and future of this transformative technology.

The Brain-Inspired Revolution

To understand the power of neuromorphic computing, one must first grasp how it differs from a traditional computer. A traditional computer is, at its core, a sequential machine. Each processor core executes a stream of instructions in order, moving data back and forth between the central processing unit (CPU) and a separate memory unit. This constant movement of data, known as the von Neumann bottleneck, is a major source of energy consumption and a significant limitation for AI.

A neuromorphic chip, by contrast, is a parallel machine. It is designed to mimic the structure and function of the human brain, a massive network of interconnected neurons. Neurons in the brain do not take turns performing calculations; they all process information in parallel and communicate with one another through brief electrical pulses, or “spikes.” A neuromorphic chip replicates this arrangement in silicon: a large network of artificial “neurons” and “synapses,” with each neuron performing a simple calculation and sending spikes to the neurons it connects to. This parallel, event-driven architecture is the source of the chip’s power and its energy efficiency.

Key Architectural Principles of Neuromorphic Chips

Neuromorphic computing is built on a set of core architectural principles that are fundamentally changing the way we think about computation. Together, these principles make neuromorphic chips more parallel, more event-driven, and more energy-efficient than conventional processors.

A. Spiking Neural Networks (SNNs):

This is the most important concept in neuromorphic computing. A traditional artificial neural network passes continuous-valued activations between layers on every forward pass, whether or not those values carry new information. A spiking neural network (SNN) instead communicates in discrete events: electrical pulses, or “spikes,” sent between neurons at particular moments in time. A neuron in an SNN accumulates the pulses it receives and only “fires” once that accumulated input crosses a threshold, loosely mirroring how a biological neuron behaves. Because most neurons are silent at any given moment, this event-driven, sparse communication is the main source of a neuromorphic chip’s energy efficiency.
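The firing rule described above can be sketched with a leaky integrate-and-fire neuron, a common simplified model of a spiking neuron. This is only an illustration, not how any particular chip implements it: the function name `simulate_lif` and the values chosen for `weight`, `leak`, and `threshold` are assumptions made for the example.

```python
def simulate_lif(input_spikes, weight=0.4, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_spikes: list of 0/1 values, one per time step.
    weight, leak, threshold: illustrative values, not taken from real hardware.
    Returns a list of 0/1 output spikes of the same length.
    """
    potential = 0.0
    output = []
    for spike in input_spikes:
        # The stored potential decays a little, then any incoming spike adds to it.
        potential = potential * leak + weight * spike
        if potential >= threshold:
            output.append(1)   # enough input has accumulated: the neuron fires
            potential = 0.0    # and resets
        else:
            output.append(0)   # otherwise it stays silent
    return output


inputs = [1, 1, 1, 0, 0, 1, 0, 0, 0, 1]
print(simulate_lif(inputs))  # -> [0, 0, 1, 0, 0, 0, 0, 0, 0, 0]
```

Three closely spaced input spikes push the neuron over its threshold, while the later isolated spikes leak away before they can add up. The output is therefore much sparser than the input.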

B. Parallel Processing and Massive Interconnectivity:

A traditional computer has a handful of powerful cores that perform calculations in a sequential fashion. A neuromorphic chip, by contrast, is designed with a massive number of interconnected “neurons” and “synapses” that can process information in parallel.

- Massive Parallelism: A neuromorphic chip can have hundreds of thousands to around a million “neurons” and hundreds of millions of “synapses,” all working at the same time. IBM’s TrueNorth, for example, has about one million neurons and 256 million synapses. This massive parallelism allows the chip to perform many simple calculations simultaneously, which suits AI workloads that can be spread across many small units.

- Event-Driven Communication: The communication between the neurons in a neuromorphic chip is event-driven. A neuron only communicates with other neurons when it receives enough electrical pulses to “fire.” This sparse communication is the source of a neuromorphic chip’s unparalleled energy efficiency.
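To see why sparse, event-driven communication saves work, the sketch below compares two ways of updating the same small layer. One touches every synapse on every step, and the other only touches the synapses of neurons that actually spiked. The function names, the layer size, and the weight values are all hypothetical, and counting synaptic operations is only a rough stand-in for energy.

```python
def dense_step(weights, activity):
    """Clock-driven update: visit every synapse, whether its source is active or not."""
    totals = [0.0] * len(weights[0])
    ops = 0
    for i, row in enumerate(weights):
        for j, w in enumerate(row):
            totals[j] += w * activity[i]
            ops += 1
    return totals, ops


def event_driven_step(weights, spike_events):
    """Event-driven update: only the synapses of neurons that spiked do any work."""
    totals = [0.0] * len(weights[0])
    ops = 0
    for i in spike_events:
        for j, w in enumerate(weights[i]):
            totals[j] += w
            ops += 1
    return totals, ops


# 100 source neurons, 4 target neurons, made-up weights.
weights = [[0.1 * (i + j) for j in range(4)] for i in range(100)]

# Only 3 of the 100 source neurons spike on this time step.
activity = [0] * 100
for i in (3, 42, 77):
    activity[i] = 1
spike_events = [i for i, a in enumerate(activity) if a]

dense_totals, dense_ops = dense_step(weights, activity)
event_totals, event_ops = event_driven_step(weights, spike_events)

print(dense_ops, event_ops)  # -> 400 12
```

Both functions deliver the same input to the target neurons, but the event-driven version does work in proportion to the number of spikes rather than the size of the network.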

C. In-Memory Computing:

In a traditional computer, the CPU and memory are separate, which means that a significant amount of energy is consumed by moving data between the two. A neuromorphic chip is designed with an in-memory computing architecture, which means that the processing and memory are located closer together. This significantly reduces the amount of energy consumed by moving data, which makes a neuromorphic chip more energy-efficient than a traditional computer.
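The data-movement argument can be made concrete with a toy cost model. This is a sketch under strong assumptions: the classes `SharedMemory` and `LocalCore` are hypothetical, and "words moved" is a crude proxy for energy, not a measurement of any real chip.

```python
class SharedMemory:
    """Stands in for a separate memory unit; counts every word read over the bus."""

    def __init__(self, weights):
        self._weights = weights
        self.words_transferred = 0

    def read(self, i, j):
        self.words_transferred += 1
        return self._weights[i][j]


def separate_memory_update(memory, spikes, n_out):
    """Processor and memory are apart: every weight used must be fetched."""
    totals = [0.0] * n_out
    for i in spikes:
        for j in range(n_out):
            totals[j] += memory.read(i, j)
    return totals


class LocalCore:
    """A core that keeps its synaptic weights next to its compute logic."""

    def __init__(self, weights):
        self._weights = weights   # resident inside the core
        self.words_received = 0

    def receive(self, spikes):
        totals = [0.0] * len(self._weights[0])
        for i in spikes:
            self.words_received += 1   # only the spike's address crosses the boundary
            for j, w in enumerate(self._weights[i]):
                totals[j] += w         # weights are read locally, no bus traffic
        return totals


weights = [[0.5 * i + j for j in range(4)] for i in range(8)]
spikes = [1, 5]

memory = SharedMemory(weights)
core = LocalCore(weights)
far_totals = separate_memory_update(memory, spikes, n_out=4)
near_totals = core.receive(spikes)

print(memory.words_transferred, core.words_received)  # -> 8 2
```

The two designs compute the same result. The difference is how much data has to travel to get there, which is the cost that keeping memory close to processing is meant to reduce.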

Major Commercial Applications and Use Cases

The potential applications of neuromorphic computing are not just academic; they are poised to revolutionize some of the world’s most critical industries.

- Robotics and Autonomous Systems: The robots of today are often slow, clumsy, and require a significant amount of power to function. A neuromorphic chip, with its ability to process information in parallel and with high energy efficiency, could enable a new generation of robots that can learn in real time and make decisions with very little power. This could lead to a new era of robots that are more agile, more efficient, and more capable of working in the real world.

- Edge AI and IoT Devices: The Internet of Things (IoT) is a vast network of interconnected devices, from smart cameras to sensors. These devices are often small and low-power, and they need to be able to perform AI tasks on the edge, without the need for a connection to a cloud-based server. A neuromorphic chip, with its high energy efficiency, is well suited to these applications. It can, for example, enable a smart camera to detect a person’s presence with very little power, or a sensor to detect a security threat in real time.

- Brain-Computer Interfaces (BCIs): This is one of the most futuristic and ambitious applications of neuromorphic computing. Researchers are developing BCIs that allow a person to control a computer with their mind. A neuromorphic chip, which processes spikes much as neurons do, is a natural hardware candidate for these applications. It could, for example, help a person with a disability control a prosthetic limb with their mind, or help a person with a neurological condition communicate through a computer.

- Data Centers and Cloud Computing: While neuromorphic chips are often associated with edge AI, they may also have a role to play in data centers and cloud computing. A neuromorphic chip could be used to run AI workloads in a data center more efficiently. Researchers are exploring, for example, whether it could help accelerate the training of a large language model or analyze a vast amount of data in real time.
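For the edge-sensor use case in the list above, one simple way to turn a stream of readings into sparse events is delta encoding, where the sensor stays silent until the signal changes by a meaningful amount. The sketch below assumes that scheme. The function name `delta_encode`, the sample readings, and the threshold are invented for illustration and do not describe any specific device.

```python
def delta_encode(samples, threshold):
    """Emit (time_step, +1 or -1) events only when the signal has moved by
    at least `threshold` since the last emitted event."""
    events = []
    reference = samples[0]
    for t, value in enumerate(samples[1:], start=1):
        change = value - reference
        if abs(change) >= threshold:
            events.append((t, 1 if change > 0 else -1))
            reference = value   # measure future changes from this new level
    return events


# Hypothetical sensor readings: steady, then a jump, then back to baseline.
readings = [20, 20, 20, 21, 20, 20, 26, 27, 27, 27, 20, 20]
events = delta_encode(readings, threshold=5)

print(events)  # -> [(6, 1), (10, -1)]
```

Twelve readings collapse into two events, so a downstream spiking network only has work to do at the moments when something in the scene actually changes.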

Key Players and Challenges

While the potential of neuromorphic computing is immense, the industry is still in its early stages. There are a number of significant technical and commercial hurdles that must be overcome before it can become a mainstream commercial tool.

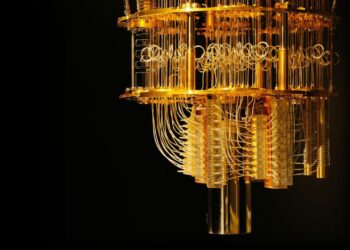

- The Role of Key Companies: Companies like IBM (TrueNorth), Intel (Loihi), and Qualcomm are leading the charge, investing heavily in neuromorphic research and development. They are building a new generation of chips and software designed to mimic the function of the human brain.

- Software and Algorithm Development: The biggest challenge for neuromorphic computing is the lack of software and algorithms that are specifically designed for its architecture. Most of today’s AI software is designed for a traditional computer, and it is a significant challenge to port it to a neuromorphic chip.

- The Talent Gap: The field of neuromorphic computing requires a highly specialized set of skills in computer science, neuroscience, and engineering. There is a significant talent gap in the industry, and universities and companies are now racing to train a new generation of neuromorphic engineers and programmers.

Conclusion

The emergence of neuromorphic chips is not just another technological advancement; it is a fundamental re-imagining of computation that will reshape AI, robotics, and our understanding of the human brain. The old model of traditional computing, with its reliance on sequential processing and its immense energy consumption, is being challenged by a new one that is inspired by the parallel, low-power processing of the human brain.

The companies and governments that are leading this charge are not just building a new technology; they are laying the foundation for a new era of human-inspired technology. The future of AI will not be defined by a world where humans are replaced by machines. Instead, it will be defined by a world where humans and machines work together to create a more efficient, more creative, and more equitable world. The neuromorphic revolution is here, and its arrival will fundamentally change our understanding of what is possible.
